Dear Friends,

I’m sharing the ‘Curator’ post for the week. These are riffs on interesting or provocative articles from the ‘artistic/intellectual’ web.

Best,

Sam

END THE PRIZES!

I don’t know what’s going on with the anarchist mood at the Los Angeles Review of Books but I like it! Recently, Stephen Akey wrote on his determination to have nothing to do with the conventional barometers of literary success. Now Dan Sinykin is writing a scathing critique of the whole practice of literary prizes.

Sinykin describes his journey, which runs from being part of the prize matrix - creating a lofty life-vision that culminates in receiving prizes; participating in bitterness and resentment for those who get the prizes that he, in his heart of hearts, feels he deserves; and then piercing the veil and seeing the ludicrousness of prizes altogether. “I became curious about value: how we make judgments about the quality of art,” Sinykin writes. “As a first step, I let go of my attachment to normative standards of success; when I did, when I began reading literature on no major prize jury’s radar, I found elating aesthetic experiences awaiting me, there in the cultural shadows.”

Sinykin’s journey, to me, sounds exactly like what the process of maturity is - everybody has their own narcissism, and then learns with time to let that go, and then their self-regard takes a different, and more fruitful, direction - in service to their craft; and honoring others whose work they respect. But prizes short-circuit and contradict that whole process of maturation - they make the artist’s trajectory seem not so much about craft, or even the creation of very good work, as about the moment of fulfillment, when one stands basking in applause, exactly as one dreamed about in the most narcissistic moments of one’s childhood.

Mature people who receive prizes are appropriately bemused by them. I remember being very struck by a Charlie Kaufman interview in which he said, sincerely, of the Oscars, that whole thing is so silly, isn’t it, and swiftly discovered that his interviewer didn’t think it was silly at all. An odd moment, of Kaufman trying to be normal and down-to-earth, and his interviewer giving him this starry-eyed look, like, no, you cannot just leave the cult that has taken you in.

Sinykin’s piece pairs nicely with Rubén Gallo’s depiction of the most ludicrous prize of all - the Nobel - and the way that a simple phone call from a ‘very proper gentleman’ to Mario Vargas Llosa turned Llosa’s life, and Princeton University, into a circus. “The Nobel storm reached Princeton,” writes Gallo. “A day didn’t go by without journalists from all over the world showing up and walking across the university’s campus as if it were their own home, even making their way into classrooms where Mario was giving his seminar.” Vargas Llosa seemed to take the whole thing in stride. Gallo was a bit more surprised - particularly when a perfectly pedestrian academic talk, something that was already on Vargas Llosa’s calendar and was in Spanish, suddenly drew an overflowing crowd. “You don’t know about Nobel Prize winners,” the head of Princeton’s, yes, Nobel Prize office told Gallo, setting him straight. “People want to see them, go up to them, touch them.”

So what to make of all that? Well, of course, it’s touching - the apparent majority of the crowds pouring in on Princeton were from the Peruvian community in Paterson, New Jersey, and were just excited for Peru’s international success. And I’m definitely not immune to this sort of thinking. I remember, at one stage as a listomaniac kid, actually trying to memorize the Nobel Prize winners. But, in retrospect, it’s like what a waste of brain space - which could have better been taken up by more baseball statistics.

What’s really going on, I think, is that people don’t actually like to read; find it intimidating; and have trouble forming their own judgments about challenging works of literature. But people do like to be cultured - and so it makes all kinds of social sense to outsource culture to some authoritative body, like a prize committee, like that very proper Swedish gentleman on the other end of the phone. That particular set of psychological and sociological dynamics dominates culture in the era of prizes and is surprisingly far-reaching. The fantasy of prizes is often, as Sinykin bravely documents, what prompts people to get into art in the first place. The prizes have an exponential impact on book sales - really, they drive an industry that is rooted in ‘prestige.’ And the standard ‘cultured person’s’ conversation tends to be in reference to prizes - arguments about whether such and such an artist deserves their status; about whether prizes have really gone to the right recipients. It’s an inexhaustible topic of conversation - makes everybody feel smart; and best of all keeps people from actually having to engage with the ideas, or subject matter, of the work being discussed.

Unfortunately, the prizes themselves are often, really, based on nothing at all. As Sinykin writes, citing a thoroughgoing study of literary awards, “[The study] shows how a small group of writers who served often as judges wielded disproportionate influence and shows how often prizes appear reciprocal: those who give later receive, and vice versa.” In other words, an incredibly corrupt, dirty business.

To all of which, the normal response is - yeah, so what? The prizes are fun, good for the business of literature, and not to be taken too seriously. But the issue is that they are taken very seriously - and so seriously that they tend to crowd out everything else. And the everything else isn’t just the ‘content’ or ‘quality’ of some work of literature - it’s the point of the whole exercise, which is the one-on-one transmission of experience from writer to reader. Basically, we’re all fucking sheep; and social relations, on the whole, encourage us to be sheep. Reading teaches us, quietly, painstakingly, how to not be sheep - how to craft our own judgments, how to form our own idiosyncratic worldview. Prizes - which come from the very heart of the literary industry - reverse that entire process, teach us that there’s some external authority that knows; that reading is a collective, social activity after all; and that the whole process of maturing, of forming one’s own judgments, doesn’t really matter, that everybody at the end of the day is just a child who wants to be clapped at and to shake hands with the King of Sweden. There’s not much room for reforming any of this - the year-end lists, the prizes, aren’t going anywhere; but it is important, for people who take this activity seriously, to create a sort of inner fortress against them, to know that they contradict everything that writing is for.

REVIVE THE CRITICS!

Relatedly, Merve Emre has a beautiful piece in The New Yorker discussing what a critic really is and how that role can be revived.

The critic would seem to be included in my diatribe against prizes - but that’s really because of the sort of debased role that a critic currently occupies. The current conception of the critic, as documented by Emre, is basically an extension of industrialization - and presents itself as a broker for a busy public. Nobody can possibly see or read everything, runs the critic’s raison d’être, so the critic will take that unpleasant task upon themselves, will sift the wheat from the chaff, will determine what is worthy of the public’s attention. And what the critic answers to - ultimately - is a conception of standards. Does such and such a work rise to the level? Does it meet the bar?

That professionalized conception of the critic, anchored on newspapers, is compounded by a separate path towards professionalization, anchored on the university. “Literary evaluation draws authority from the institutions - principally universities - within which it is practiced,” writes Emre, drawing upon the work of John Guillory. That easily-mockable iteration of literary criticism tends to get consumed with its own institutional authority, becoming incessantly concerned with disciplines and epistemology. As Emre writes, “Criticism became more interested in its own protocols than in ‘the verbal work of art.’” And, as Emre writes - in a mirror of the discussion on prizes - the whole discourse of culture shifts into something that’s closer to culture-signaling, the demonstration of ‘cultural capital.’ “To be the kind of person who could translate The Iliad in 1880, or do a close reading of a poem in 1950, or ‘queer’ a work in 2010, was to be manifestly the product of a university, and to reap economic and social rewards because of it,” writes Emre.

Most hand-wringing in the humanities is about the collapse of these two modes of professionalization. The universities can’t provide as many humanities jobs as they at one time seemed to promise. And the newspapers have been cutting back on critics. But, as Emre notes, these professionalized niches never had all that much real economic value. “The hard truth is that no reader needs literary works interpreted for her, certainly not in the professionalized language of the literary scholar,” she writes. And the niches always had a dubious relationship to the actual production of art.

The path forward owes a great deal to the role of the critic in the pre-industrial era when, as Emre writes, “at the height of its cultural renown, criticism was no handmaiden to literature, it was its partner, its equal in substance and style, its superior in its capacity to enter the world beyond the page and the imagination.” Essentially, criticism is also a form of art. Artists go hacking through the psychological undergrowth. Critics are meant to be moving alongside them, trying to interpret and explain, in somewhat plainer language, what the artists are up to - and to point out fresh thickets that the artists may benefit from exploring. The critics I most enjoy reading - people like Milan Kundera, Czeslaw Milosz, J.M. Coetzee - are writers themselves; and their critical work is a completely natural accompaniment to their fictional writing, really an attempt to understand, with a different part of their brain, what they themselves are doing in their fiction.

That is a very different undertaking, personalized and idiosyncratic - “the great critic’s expertise was based on his own authority,” writes Emre - from the current conception of the critic as a sort of urban planner, standing in for the public, setting the artists straight, and relying on the authority of the university or newspaper that employs them. Emre, quoting Magali Sarfatti Larson, blames “the process by which producers of special services sought to constitute and control a market for their expertise,” which entailed, above all, the domestication of criticism by the university system around the early part of the 20th century. No use bemoaning that development - it helped to employ a lot of people in the humanities - but now that that model is largely breaking down, it is time to return to something older and wilder and also more in tune with the creative potentialities of the critic.

JUNK SCIENCE?

The Los Angeles Review of Books has another nice piece - this one on Thomas Kuhn and his paradigm shifts. The article’s author, Paul Dicken, summarizes Kuhn’s description of the epiphany that shaped his career.

He had been a graduate student at the time, preparing the obligatory freshman survey course in the history of science, and trying to understand how anyone ever accepted Aristotelian physics. Kuhn vividly recalls gazing out of his window for some time before suddenly grasping that Aristotle had meant something very different by “motion” from his Newtonian successors.

Which is beautifully described. It wasn’t that Aristotle’s ‘motion’ or Newton’s ‘motion’ is better or worse - it’s that, in spite of the surface similarity, they were two completely different worlds with different frames of reference. As Dicken writes, “It was essentially a problem of translation: of not being able to think oneself into an entirely different world.”

Kuhn’s insights weren’t just a sort of marveling at the variegated history of science: they called into question the entire ‘scientific method.’ By the time he had laid out his theories - the insight of that moment in his Harvard office - science no longer seemed to move linearly forward; instead, it mutated, shape-shifted, wrapped itself around fresh bodies of available evidence, adopted very different consensuses from generation to generation about what it was doing. As Kuhn wrote, the move from one scientific paradigm to another tended to be experienced as a “conversion experience.”

Kuhn didn’t necessarily mean that literally, and as documented in the LARB piece, Kuhn in his later writing attempted a bit to stick the genie back in the bottle, to contend that the various scientific paradigms had more in common than not, but my inclination is to take him at his word. Each scientific discipline is built largely on belief - i.e. a social conception of what the world is and what the questions are that need to be solved. Kuhn is particularly insightful on the divergence of cultures - to the extent that various cultures appear not to distinguish between green and blue; that Western palates still cannot quite comprehend a taste like umami. In Kuhn’s terms, the ‘taxonomies’ of the world are strangers to one another; the foundations of how the world is perceived differ in fundamental ways.

That understanding takes one a long way from the alleged facticity of the scientific method - that, with science, you are getting some base-level understanding of truth, with blocks added to other blocks. Which seems hard to do if, over time, the nature of the blocks themselves keeps shifting around. And, with that understanding, very many of the blocks start to seem more like assertions, and more changeable. Aristotle’s view of motion isn’t some partial hypothesis that was later elaborated upon, and corrected, by Newton; Aristotle’s physics spoke to the Greek world in a way that Newton’s spoke to the ‘scientific era.’

Science has had a series of shocks recently that are being only half taken in - the rise of placebo in studies; the challenges in replicating study results; the recent study revealing a dearth of disruptive science over the last half-century. There are various ways of parsing all of these findings. My light suggestion is that they reveal a fundamental shortcoming in science - and very much as analyzed by Kuhn. That science isn’t linear and isn’t ‘objective.’ And that, in some subcutaneous way, society is expelling science from its system - that, even as the scientific disciplines become ever more regimented, an ever tighter ship, their ‘discoveries,’ in some crucial way, don’t exactly pass over to the population-as-a-whole and appear less-than-meaningful.

The lines here are blurry because when we talk about ‘science’ we are talking about at least a couple of different things. There is science, which is the disciplined observation and understanding of the world - what used to be called ‘natural science’ - and then there is applied science, ‘tech,’ which is marching forward and appears indestructible. Tech really is a self-contained mechanism; it builds upon itself and on previous discoveries. But science, as observation of the world, does rest on some facticity and verifiability. As beliefs about the world shift - and beliefs inevitably do shift, I would contend, given the psychological needs of a society - science’s observations tend to shift themselves around in ways that belie that foundational claim to objectivity.

A good example of what I’m talking about - and there are many examples - appears in a discussion on free will in Scientific American. The scientific ‘consensus’ on free will, for decades, had been that it doesn’t exist. The evidence for that is largely the result of neuroscience’s insight into ‘readiness potential’ - a series of studies showing that individuals were primed to make decisions, for instance about buying groceries, up to ten seconds before they had even made that decision. The readily-drawn conclusion from these studies was that “people are merely puppets, pushed around by neural processes unfolding below the threshold of consciousness.” But the studies were based on very little. As Alessandra Buccella and Tomáš Dominik, the authors of the Scientific American piece, note, the tests were invariably focused on arbitrary choices, which item to buy, etc. Create different tests involving meaningful choices - for instance, whether to give $1000 to one charity or a different one - and the ‘readiness potential’ evaporates. The test subjects grapple with a difficult choice exactly in the way that individuals with ‘free will’ would be expected to.

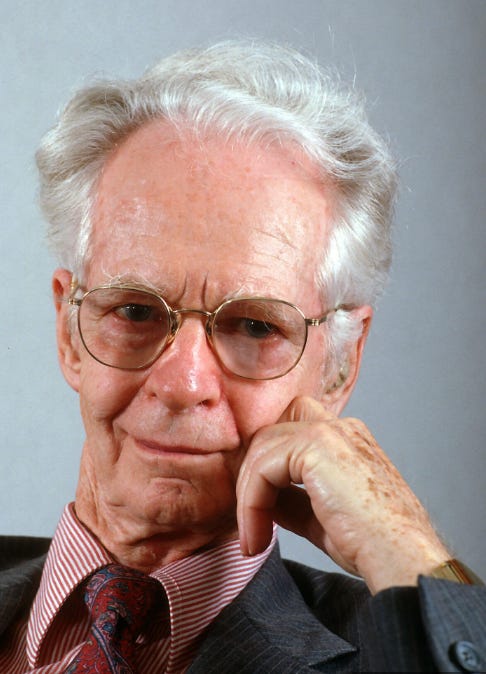

The willingness of the scientific community and of the educated-culture-at-large to accept the absence of free will - it’s usually presented as an assertion - is symptomatic, to me, of the culture’s reliance on B.F. Skinner’s behaviorist model of how human beings function. The simple summary of that view is that human beings are entirely conditioned to make certain choices in given situations - and those choices can be readily manipulated. It just so happens that that view of the world aligns closely with the lab conditions that Skinner experienced for the majority of his career; and there comes to be a symbiosis between domains. The world is constructed to look more like a lab, with a narrower array of choices placed in front of an individual; and then, sure enough, behavioral analysis proves adept at predicting an individual’s choices.

Behaviorism, as a paradigm and a consensus, does start to look like facticity when applied to a world in which we have our consumer options, our gadgets, in front of us all day long. But create different conditions - for instance, the moral quandaries of the study discussed in Scientific American - and, suddenly, reality shifts, a quaint concept like free will comes back into play, and some different paradigm, which may not be behaviorism and may not even be ‘science’ at all, is called upon to understand it.

GOD NEVER DIED AT ALL

If science turns out to be just another belief, and in important ways to be weakening its social hold, the question is what takes its place. My answer would be religion - but not exactly religion in its stuffed shirt, fill-the-church-pews iteration. More religion in the sense that individuals, and communities, create their beliefs about the world; and align those beliefs with the sorts of fulfilling lives that they want to live.

The great lesson of the 20th century - which really would have stunned the progressive orthodoxy of the 19th - is the surprising compatibility of modes of faith, including very traditional ones, with the modern world. An article in The Hedgehog Review, responding to Dominic Green’s The Religious Revolution: The Birth of Modern Spirituality 1848-1898, makes the case for a recasting of our understanding of religion reaching all the way back into the anomistic 19th century.

The usual narrative, of course, is that Darwin published The Origin of Species, at which moment the ‘sea of faith’ receded, ‘God died,’ and we suddenly found ourselves in a cold, materialist universe and a kind of permanent secular ‘homelessness.’ But, as Richard Hughes Gibson writes in The Hedgehog Review, that narrative fails to account for a whole host of phenomena. “[The standard view] is obviously not a satisfactory conclusion, and it speaks to the inadequacy of the story of the nineteenth century,” Hughes Gibson writes.

The better-known narrative of the ‘Counter-Reformation’ - religious resistance to secularism - focuses on surprising instances of the revival of organized religion, in America’s evangelical movement, in the post-Soviet resurgence of Russian Orthodoxy, in the rise of Islam, etc. Green and Hughes Gibson emphasize, however, the spiritual movements of the late 19th century, which tend to be discussed, when discussed at all, as embarrassing interludes in the lives of famous people, but in Green’s narrative come front and center. “The 19th century was an age of frantic religious creativity in which new ideas didn’t just challenge old doctrines; they also sparked novel varieties of religious experience that remain a part of our spiritual landscape ... and in which the weakening of organized religion liberated the religious impulse,” writes Green.

Hughes Gibson, understandably enough, chides Green for not being more specific about the parameters of his argument. What exactly is spirituality? What is so precious about the half-century, 1848 to 1898, that Green makes the frame of his narrative? But the whole point is that spirituality is elusive, unorganized, disparate. Brian Muraresku calls it “the religion with no name,” which wends its way from antiquity to the present. One of the favorite quotes I’ve heard in my life was from a healer who described “religion as the McDonald’s of spirituality.” In other words, once spirituality becomes organized, easily measurable or nameable, it stops being itself - and this for the very good reason that true spirituality is understood to be completely individual and idiosyncratic, a path that a person follows throughout their life, as opposed to a set of tenets that can be readily formulated and disseminated. But this is not to say that spirituality is powerless or inept; it just tends to move in quiet, subterranean ways; and much of the story of modernity is, as in Green’s narrative, the underground life of spirituality, which then emerges in plain sight in surprising periods of florescence, in the 1840s or 1960s, for instance. When those periods fade out, it’s not so much that spirituality is somehow disproven - and, incidentally, there is no way to disprove the search for meaning - as that attention moves elsewhere and spiritual endeavors go underground again.

The more I understand about history - and about neglected, obscure corners of history - the more I realize that history is not particularly linear at all. It’s really not as if there was religion and then it was supplanted by science. These same sorts of conversations have been happening for a long, long time. In some eras one strand seems to predominate; in others, something different. But that’s not to say that anybody’s winning, that anybody’s closer to truth.

I could write 175,000 words about literary awards and their functions and dysfunctions. I've served on a few big-time award committees and the process involves passion, exhaustion, anger, pride, jealousy, love of literature, boredom, bemusement, impatience, and sooooooo much nepotism and corruption ranging from ordinary to epic. As for critics, I ended my subscription to the NY Times after 23 years because the arts reviews had become mere recaps of the artists' politics.

This summarised my ambivalence about the Nobel and other big prizes (as a reader):

"Basically, we’re all fucking sheep; and social relations, on the whole, encourage us to be sheep. Reading teaches us, quietly, painstakingly, how to not be sheep - how to craft our own judgments, how to form our own idiosyncratic worldview. Prizes - which come from the very heart of the literary industry - reverse that entire process, teach us that there’s some external authority that knows; that reading is a collective, social activity after all; and that the whole process of maturing, of forming one’s own judgments, doesn’t really matter, that everybody at the end of the day is just a child who wants to be clapped at and to shake hands with the King of Sweden"

And thanks for the links to Gibson's essay; I agree about the non-linearity of history. One negative aspect of modern sociological and historical theory (from the 1940s-1950s onwards; for instance, misappropriating and misinterpreting ideas such as "Protestant Ethics") is that it painted a nice, clean narrative that is easier to understand and categorise. We are realising, more and more, that theorising is one thing but the real world challenges those theories all the time. Stories, narratives, personal experiences with grand theories can show those obscure corners of history.